Data Design in Software Engineering: A Complete Guide

Master data design in software engineering with proven strategies, best practices, and implementation techniques for scalable applications.

Modern software applications generate and consume vast amounts of information every second. The foundation for managing this complexity lies in thoughtful data design in software engineering, which determines how applications store, retrieve, and manipulate information throughout their lifecycle. Without proper data architecture, even the most elegant code becomes inefficient, unmaintainable, and prone to failure. Strategic data design transforms raw information into structured assets that drive business value, improve performance, and enable scalability for growing organizations.

Understanding the Fundamentals of Data Architecture

Data design in software engineering represents the systematic approach to organizing, structuring, and managing information within software systems. This discipline encompasses everything from choosing appropriate database models to defining relationships between entities and establishing data flow patterns across application layers.

Data architecture decisions are among the hardest to reverse once a system is in production. Poor design decisions made early in development often create technical debt that compounds over time, leading to performance bottlenecks, security vulnerabilities, and expensive refactoring efforts. Conversely, well-designed data structures provide flexibility for future enhancements while maintaining system integrity.

Core Components of Effective Data Design

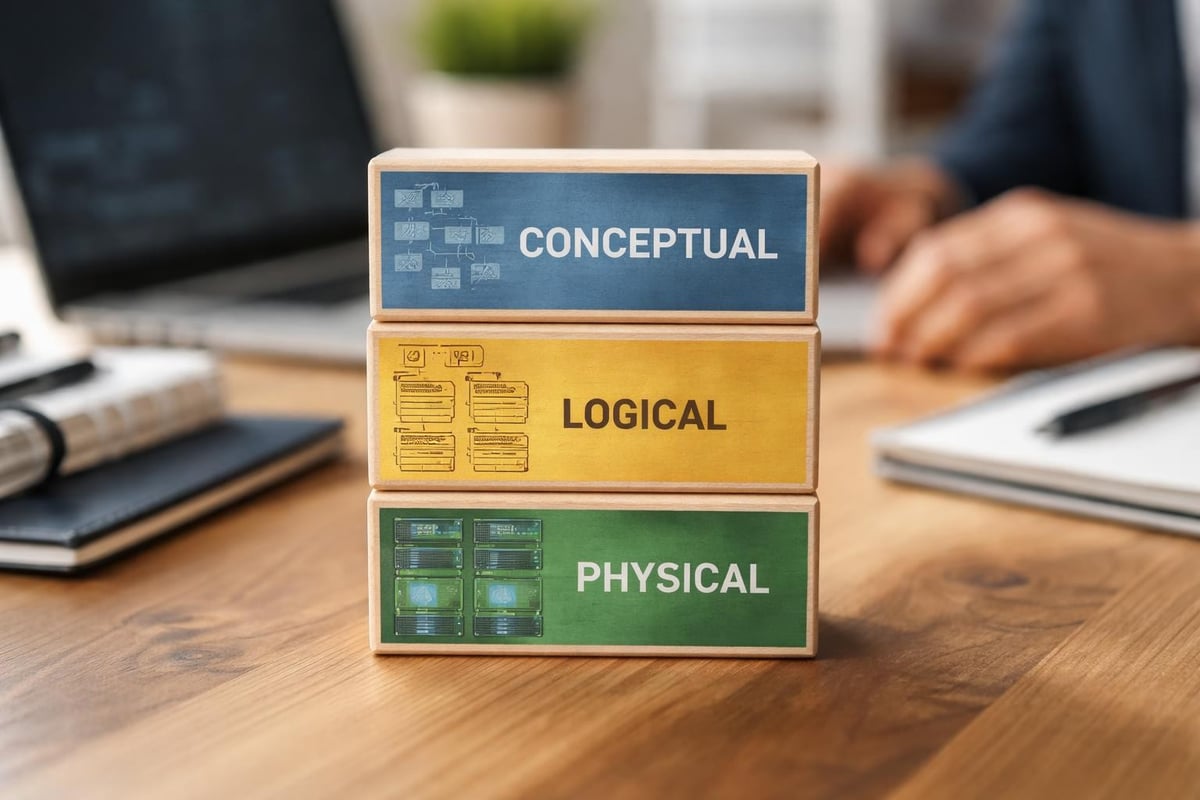

Successful data design in software engineering requires attention to several interconnected elements that work together to create robust systems:

- Conceptual models that capture business requirements and entity relationships

- Logical schemas that translate concepts into structured formats

- Physical implementations that optimize storage and retrieval patterns

- Data governance frameworks ensuring quality and compliance

- Integration patterns enabling seamless information exchange

Each component serves a distinct purpose while contributing to the overall system architecture. Understanding data engineering principles provides foundational knowledge for implementing these components effectively.

Strategic Approaches to Data Modeling

Data modeling represents the blueprint phase of data design in software engineering, where developers translate business requirements into structured representations. This process requires balancing normalization principles with practical performance considerations.

Normalization Versus Denormalization

Traditional database theory emphasizes normalization to eliminate redundancy and maintain data integrity. However, real-world applications often benefit from strategic denormalization, which accepts duplicated data and more complex updates in exchange for faster reads.

| Approach | Benefits | Trade-offs | Best Use Cases |
|---|---|---|---|
| Normalized | Data integrity, reduced redundancy | Complex joins, slower reads | Transactional systems, financial records |
| Denormalized | Fast queries, simplified reads | Data duplication, update complexity | Analytics, reporting, read-heavy applications |
| Hybrid | Balanced performance | Increased design complexity | Most production systems |

The best practices for data architecture design emphasize understanding workload characteristics before choosing optimization strategies. Modern applications frequently employ hybrid approaches, normalizing critical transactional data while denormalizing information for analytical purposes.
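
The trade-off in the table can be sketched with Python's built-in sqlite3 module. The `customers`, `orders`, and `order_report` tables below are hypothetical: the normalized schema stores each email once and needs a join to read it alongside orders, while the denormalized reporting table copies the email onto every order row so reads skip the join, at the cost of updating every copy when the email changes.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    -- Normalized: each customer's email lives in exactly one row.
    CREATE TABLE customers (id INTEGER PRIMARY KEY, email TEXT NOT NULL);
    CREATE TABLE orders (
        id INTEGER PRIMARY KEY,
        customer_id INTEGER NOT NULL REFERENCES customers(id),
        total_cents INTEGER NOT NULL
    );
    -- Denormalized reporting table: the email is copied onto each order row.
    CREATE TABLE order_report (
        order_id INTEGER PRIMARY KEY,
        customer_email TEXT NOT NULL,
        total_cents INTEGER NOT NULL
    );
""")
conn.execute("INSERT INTO customers VALUES (1, 'ana@example.com')")
conn.executemany("INSERT INTO orders VALUES (?, 1, ?)", [(10, 2500), (11, 4000)])
conn.execute("""
    INSERT INTO order_report
    SELECT o.id, c.email, o.total_cents
    FROM orders o JOIN customers c ON c.id = o.customer_id
""")

# Normalized read: needs a join.
normalized = conn.execute("""
    SELECT o.id, c.email FROM orders o
    JOIN customers c ON c.id = o.customer_id ORDER BY o.id
""").fetchall()

# Denormalized read: a single table, no join.
denormalized = conn.execute(
    "SELECT order_id, customer_email FROM order_report ORDER BY order_id"
).fetchall()

# The cost: an email change touches one row in the normalized schema
# but every copied row in the denormalized table.
conn.execute("UPDATE customers SET email = 'ana@new.example' WHERE id = 1")
copies_updated = conn.execute(
    "UPDATE order_report SET customer_email = 'ana@new.example' "
    "WHERE customer_email = 'ana@example.com'"
).rowcount
```

Both reads return the same rows; the difference shows up in how much work each write has to do, which is why hybrid designs keep the normalized tables as the source of truth and rebuild reporting tables from them.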

Entity Relationship Design

Defining clear relationships between data entities forms the backbone of maintainable systems. One-to-one, one-to-many, and many-to-many relationships each impose different constraints and require specific implementation patterns.

Strong entity relationship design prevents data anomalies and ensures referential integrity. Foreign key constraints, cascade operations, and transaction boundaries must be carefully considered during the design phase. When building custom software applications, establishing these relationships correctly from the start prevents costly refactoring later.
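
A minimal sketch of these relationship patterns in SQLite, using hypothetical `authors`, `books`, and `tags` tables: a one-to-many foreign key with `ON DELETE CASCADE`, and a many-to-many junction table whose composite primary key prevents duplicate links. Note that SQLite only enforces foreign keys when `PRAGMA foreign_keys = ON` is set on the connection.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# SQLite enforces foreign keys only when this pragma is enabled per connection.
conn.execute("PRAGMA foreign_keys = ON")
conn.executescript("""
    CREATE TABLE authors (id INTEGER PRIMARY KEY, name TEXT NOT NULL);
    -- One-to-many: each book belongs to exactly one author.
    CREATE TABLE books (
        id INTEGER PRIMARY KEY,
        author_id INTEGER NOT NULL REFERENCES authors(id) ON DELETE CASCADE,
        title TEXT NOT NULL
    );
    CREATE TABLE tags (id INTEGER PRIMARY KEY, label TEXT NOT NULL UNIQUE);
    -- Many-to-many: a junction table; the composite key blocks duplicate links.
    CREATE TABLE book_tags (
        book_id INTEGER NOT NULL REFERENCES books(id) ON DELETE CASCADE,
        tag_id INTEGER NOT NULL REFERENCES tags(id) ON DELETE CASCADE,
        PRIMARY KEY (book_id, tag_id)
    );
""")
conn.execute("INSERT INTO authors VALUES (1, 'Ada')")
conn.execute("INSERT INTO books VALUES (1, 1, 'Notes')")
conn.execute("INSERT INTO tags VALUES (1, 'math')")
conn.execute("INSERT INTO book_tags VALUES (1, 1)")

# Referential integrity: a book pointing at a missing author is rejected.
try:
    conn.execute("INSERT INTO books VALUES (2, 99, 'Orphan')")
    orphan_rejected = False
except sqlite3.IntegrityError:
    orphan_rejected = True

# Cascade: deleting the author removes the book, which removes its tag links.
conn.execute("DELETE FROM authors WHERE id = 1")
remaining_books = conn.execute("SELECT COUNT(*) FROM books").fetchone()[0]
remaining_links = conn.execute("SELECT COUNT(*) FROM book_tags").fetchone()[0]
```

Whether a child row should cascade, be restricted, or be set to NULL on delete is a business decision; cascading is shown here only because it makes the effect easy to observe.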

Implementing Data Design Patterns

Design patterns provide proven solutions to recurring data challenges in software engineering. Applying established patterns accelerates development while reducing the risk of architectural mistakes.

Repository Pattern for Data Access

The repository pattern abstracts data persistence logic, creating a clean separation between business logic and data access code. Because business logic depends only on the repository interface, developers can change the underlying storage technology with little or no change to application logic.

Implementing repositories involves creating interfaces that define data operations, then providing concrete implementations for specific storage systems. This approach supports testing through mock repositories and facilitates migration between databases as requirements evolve.
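
A minimal sketch of the pattern, assuming a hypothetical `User` entity: `UserRepository` is the interface business logic depends on, with one dict-backed implementation suitable as a test double and one backed by SQLite. The `register` function is illustrative business logic that never touches storage directly.

```python
import sqlite3
from dataclasses import dataclass
from typing import Dict, Optional, Protocol


@dataclass(frozen=True)
class User:
    id: int
    email: str


class UserRepository(Protocol):
    """The interface business logic depends on; storage details stay behind it."""
    def add(self, user: User) -> None: ...
    def get(self, user_id: int) -> Optional[User]: ...


class InMemoryUserRepository:
    """Dict-backed implementation, useful as a mock in unit tests."""
    def __init__(self) -> None:
        self._users: Dict[int, User] = {}

    def add(self, user: User) -> None:
        self._users[user.id] = user

    def get(self, user_id: int) -> Optional[User]:
        return self._users.get(user_id)


class SqliteUserRepository:
    """Same interface, persisted in SQLite."""
    def __init__(self, conn: sqlite3.Connection) -> None:
        self._conn = conn
        conn.execute(
            "CREATE TABLE IF NOT EXISTS users (id INTEGER PRIMARY KEY, email TEXT NOT NULL)"
        )

    def add(self, user: User) -> None:
        self._conn.execute(
            "INSERT INTO users (id, email) VALUES (?, ?)", (user.id, user.email)
        )

    def get(self, user_id: int) -> Optional[User]:
        row = self._conn.execute(
            "SELECT id, email FROM users WHERE id = ?", (user_id,)
        ).fetchone()
        return User(*row) if row else None


def register(repo: UserRepository, user_id: int, email: str) -> User:
    """Business logic: normalizes the email and refuses duplicate ids."""
    if repo.get(user_id) is not None:
        raise ValueError(f"user {user_id} already exists")
    user = User(user_id, email.strip().lower())
    repo.add(user)
    return user
```

Since `register` only sees the protocol, the same function runs unchanged against either store, which is what makes swapping databases or injecting a test double straightforward.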

Event Sourcing and CQRS

Event sourcing records every change as an immutable event in an append-only log instead of storing only the current state; the current state is rebuilt by replaying those events. Combined with Command Query Responsibility Segregation (CQRS), which separates the write (command) path from the read (query) path, this pattern lets each path be optimized independently.

These advanced patterns excel in scenarios requiring complete audit trails, complex business logic, or highly scalable read operations. However, they introduce additional complexity that must be justified by specific business requirements.
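
An in-memory sketch of both ideas together, using a hypothetical bank-account domain. The write side validates a command against state rebuilt from the event log, and the read side keeps a separate projection updated from the same events. For simplicity the projection is updated synchronously here; production systems usually persist the log and update read models asynchronously.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Event:
    account_id: str
    kind: str    # "deposited" or "withdrawn" in this sketch
    amount: int  # in cents


class EventStore:
    """Append-only log: events are never updated or deleted."""
    def __init__(self) -> None:
        self._events: List[Event] = []
        self._subscribers: List[Callable[[Event], None]] = []

    def append(self, event: Event) -> None:
        self._events.append(event)
        for handler in self._subscribers:
            handler(event)

    def events_for(self, account_id: str) -> List[Event]:
        return [e for e in self._events if e.account_id == account_id]

    def subscribe(self, handler: Callable[[Event], None]) -> None:
        self._subscribers.append(handler)


def current_balance(store: EventStore, account_id: str) -> int:
    """Write side: rebuild state by replaying the account's events in order."""
    balance = 0
    for e in store.events_for(account_id):
        balance += e.amount if e.kind == "deposited" else -e.amount
    return balance


def withdraw(store: EventStore, account_id: str, amount: int) -> None:
    """Command: validate against replayed state, then record a new event."""
    if current_balance(store, account_id) < amount:
        raise ValueError("insufficient funds")
    store.append(Event(account_id, "withdrawn", amount))


class BalanceReadModel:
    """Query side: a denormalized projection kept up to date from events."""
    def __init__(self, store: EventStore) -> None:
        self.balances: Dict[str, int] = {}
        store.subscribe(self.apply)

    def apply(self, e: Event) -> None:
        delta = e.amount if e.kind == "deposited" else -e.amount
        self.balances[e.account_id] = self.balances.get(e.account_id, 0) + delta
```

The full event history doubles as an audit trail, while queries read the precomputed `balances` instead of replaying events on every request.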

Data Quality and Validation Strategies

Quality data serves as the lifeblood of reliable software systems. Implementing comprehensive validation strategies prevents corrupt or inconsistent information from entering systems and compromising application integrity.

Input Validation and Sanitization

Every entry point into a system represents a potential source of invalid data. Robust validation occurs at multiple layers:

- Client-side validation providing immediate user feedback

- API-level validation enforcing business rules and constraints

- Database constraints serving as the final defense against invalid data

- Background validation identifying and correcting existing data issues
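
As an illustration of the API-level layer, here is a sketch of a validator for a hypothetical signup payload with `email`, `age`, and `display_name` fields. Client-side checks can be bypassed, so a layer like this runs on the server before any write, and database constraints still back it up. The email pattern is deliberately simple and is not full RFC 5322 validation.

```python
import re
from typing import Any, Dict, List

# Deliberately simple pattern for illustration; not full RFC 5322 validation.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def validate_signup(payload: Dict[str, Any]) -> List[str]:
    """Server-side checks run before any database write; returns all errors found."""
    errors: List[str] = []

    email = payload.get("email")
    if not isinstance(email, str) or not EMAIL_RE.match(email.strip()):
        errors.append("email: must be a valid address")

    age = payload.get("age")
    # bool is a subclass of int in Python, so reject it explicitly.
    if not isinstance(age, int) or isinstance(age, bool) or not 13 <= age <= 130:
        errors.append("age: must be an integer between 13 and 130")

    name = payload.get("display_name", "")
    if not isinstance(name, str) or not 1 <= len(name.strip()) <= 50:
        errors.append("display_name: must be 1-50 characters")

    return errors
```

Returning every error at once, rather than stopping at the first, lets the client show all problems in a single round trip.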

High-quality data matters at every stage of software development, because poor data quality cascades through systems, affecting decision-making and user experience.

Data Type Selection and Constraints

Choosing appropriate data types affects storage efficiency, query performance, and application behavior. String lengths, numeric precision, date formats, and enumeration types must align with business requirements while providing adequate flexibility for future changes.

Database constraints including unique indexes, check constraints, and not-null requirements encode business rules directly into the data layer. This approach provides consistent enforcement regardless of which application layer performs the operation.
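
A short sketch of these constraints on a hypothetical `products` table in SQLite: `UNIQUE`, `NOT NULL`, and `CHECK` clauses reject bad rows no matter which code path issues the insert. Prices are stored as integer cents, one common way to avoid floating-point rounding for money.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("""
    CREATE TABLE products (
        id INTEGER PRIMARY KEY,
        sku TEXT NOT NULL UNIQUE,
        -- Integer cents avoid floating-point rounding errors for money.
        price_cents INTEGER NOT NULL CHECK (price_cents >= 0),
        -- An enumeration encoded as a CHECK over allowed values.
        status TEXT NOT NULL CHECK (status IN ('draft', 'active', 'retired'))
    )
""")
conn.execute(
    "INSERT INTO products (sku, price_cents, status) VALUES ('A-1', 1999, 'active')"
)


def try_insert(sku, price_cents, status):
    """Return None on success, or the database's constraint error message."""
    try:
        conn.execute(
            "INSERT INTO products (sku, price_cents, status) VALUES (?, ?, ?)",
            (sku, price_cents, status),
        )
        return None
    except sqlite3.IntegrityError as exc:
        return str(exc)


duplicate = try_insert("A-1", 500, "draft")       # violates UNIQUE(sku)
negative = try_insert("B-2", -1, "draft")         # violates CHECK (price_cents >= 0)
bad_status = try_insert("C-3", 500, "archived")   # violates the status CHECK
missing = try_insert(None, 500, "draft")          # violates NOT NULL on sku
```

The exact wording of the error messages varies between SQLite versions, but each failed insert is rejected and the table keeps only the one valid row.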

Scalability Considerations in Data Design

Scalability challenges emerge as applications grow in users, features, and data volume. Anticipating growth during initial data design in software engineering prevents costly architectural overhauls later.

Partitioning and Sharding Strategies

Horizontal partitioning divides large tables into smaller, more manageable segments based on specific criteria. Sharding extends this concept by distributing partitions across multiple database servers, so storage capacity and query throughput can grow by adding servers rather than by buying a larger single machine.

Effective partitioning requires careful selection of partition keys that evenly distribute data and align with common query patterns. Poor partition key choices create hotspots where some partitions become overwhelmed while others remain underutilized.
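
The effect of the partition key can be sketched with hash-based shard routing over a hypothetical order workload in which 90% of orders come from one country. Hashing the high-cardinality `order_id` spreads rows evenly, while hashing the skewed `country` field concentrates almost everything on one shard.

```python
import hashlib
from collections import Counter

NUM_SHARDS = 4


def shard_for(key: str, num_shards: int = NUM_SHARDS) -> int:
    """Map a partition key to a shard using a stable hash.

    Python's built-in hash() is randomized per process for strings,
    so a hashlib digest is used to keep routing deterministic.
    """
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_shards


# Hypothetical workload: 10,000 orders, 90% of them from a single country.
orders = [
    {"order_id": f"ord-{i}", "country": "US" if i % 10 else "DE"}
    for i in range(10_000)
]

# High-cardinality key: rows spread roughly evenly across all shards.
by_order_id = Counter(shard_for(o["order_id"]) for o in orders)

# Low-cardinality, skewed key: at most two shards receive any rows,
# and one of them holds at least 90% of the data (a hotspot).
by_country = Counter(shard_for(o["country"]) for o in orders)
```

Simple modulo routing reassigns most keys when `NUM_SHARDS` changes; consistent hashing or a lookup-based directory is the usual way to limit that movement.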

Caching Layers and Data Replication

Strategic caching reduces database load by storing frequently accessed data in faster storage systems. Cache invalidation strategies ensure users receive current information while maintaining performance benefits.
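
One common caching strategy is cache-aside, sketched below with a time-to-live (TTL) plus explicit invalidation on writes. The `loader` callable stands in for a database query and the injectable `clock` exists only so the sketch is easy to test; both are assumptions of this example, not part of any particular cache library.

```python
import time
from typing import Any, Callable, Dict, Tuple


class CacheAside:
    """Cache-aside: read from the cache, fall back to the loader, store the result.

    Entries expire after ttl_seconds, and writers call invalidate() so readers
    do not keep seeing stale data until the TTL runs out.
    """

    def __init__(self, loader: Callable[[str], Any], ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._loader = loader
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries: Dict[str, Tuple[float, Any]] = {}  # key -> (expires_at, value)
        self.misses = 0

    def get(self, key: str) -> Any:
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]  # fresh hit: no database call
        self.misses += 1
        value = self._loader(key)  # miss or expired: go to the source of truth
        self._entries[key] = (now + self._ttl, value)
        return value

    def invalidate(self, key: str) -> None:
        self._entries.pop(key, None)
```

The TTL bounds how stale an entry can get if an invalidation is ever missed, while explicit invalidation keeps the common case fresh.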

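Data replication improves both availability and read performance by maintaining synchronized copies across multiple servers. Replication strategies range from synchronous approaches, which acknowledge a write only after replicas confirm it and so keep copies consistent, to asynchronous methods, which acknowledge immediately and favor availability and write latency at the risk of replicas briefly lagging behind.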

Security and Compliance in Data Architecture

Data security represents a critical dimension of data design in software engineering, particularly as regulations like GDPR and CCPA impose strict requirements on data handling. Security considerations must be integrated into initial design rather than retrofitted later.

Encryption and Access Control

Data encryption protects sensitive information both at rest and in transit. Modern applications typically employ column-level encryption for highly sensitive fields, full database encryption for comprehensive protection, and transport layer security for data in motion.

Role-based access control (RBAC) restricts data access based on user permissions and organizational roles. Implementing granular access controls at the data layer provides defense in depth, complementing application-level security measures. Organizations pursuing quality certifications must demonstrate robust security controls throughout their data architecture.
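
The RBAC model can be sketched in a few lines. The roles, permission names, and masked `email`/`phone` fields below are hypothetical; in practice the role table usually lives in the database or an identity provider, and many databases can also enforce grants directly (for example with `GRANT` statements or row-level security).

```python
from enum import Enum
from typing import Dict, FrozenSet, Iterable


class Permission(Enum):
    READ_ORDERS = "orders:read"
    WRITE_ORDERS = "orders:write"
    READ_PII = "customers:read_pii"


# Hypothetical role-to-permission mapping.
ROLE_PERMISSIONS: Dict[str, FrozenSet[Permission]] = {
    "support": frozenset({Permission.READ_ORDERS}),
    "fulfillment": frozenset({Permission.READ_ORDERS, Permission.WRITE_ORDERS}),
    "compliance": frozenset({Permission.READ_ORDERS, Permission.READ_PII}),
}

PII_FIELDS = {"email", "phone"}


def is_allowed(roles: Iterable[str], needed: Permission) -> bool:
    """Allow if any of the user's roles carries the permission; unknown roles grant nothing."""
    return any(needed in ROLE_PERMISSIONS.get(r, frozenset()) for r in roles)


def customer_view(row: dict, roles: Iterable[str]) -> dict:
    """Field-level enforcement: mask PII columns unless the caller may read them."""
    roles = list(roles)
    if is_allowed(roles, Permission.READ_PII):
        return dict(row)
    return {k: ("***" if k in PII_FIELDS else v) for k, v in row.items()}
```

Denying by default (unknown roles map to an empty permission set) keeps a misconfigured role from silently widening access.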

Audit Trails and Data Lineage

Comprehensive audit trails track who accessed or modified data, when changes occurred, and what information was altered. This capability supports regulatory compliance, security investigations, and troubleshooting production issues.
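
One way to capture *what* changed and *when* at the data layer is a database trigger, sketched here in SQLite on a hypothetical `accounts` table. SQLite has no notion of database users, so recording *who* made a change would need the application to supply the acting user (for example through an extra column); that part is omitted from this sketch.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE accounts (id INTEGER PRIMARY KEY, email TEXT NOT NULL);
    CREATE TABLE audit_log (
        id INTEGER PRIMARY KEY,
        table_name TEXT NOT NULL,
        row_id INTEGER NOT NULL,
        action TEXT NOT NULL,
        old_value TEXT,
        new_value TEXT,
        changed_at TEXT NOT NULL DEFAULT (datetime('now'))
    );
    -- Triggers fire for every change, whichever application issued it.
    CREATE TRIGGER accounts_audit_update AFTER UPDATE OF email ON accounts
    BEGIN
        INSERT INTO audit_log (table_name, row_id, action, old_value, new_value)
        VALUES ('accounts', OLD.id, 'UPDATE', OLD.email, NEW.email);
    END;
    CREATE TRIGGER accounts_audit_delete AFTER DELETE ON accounts
    BEGIN
        INSERT INTO audit_log (table_name, row_id, action, old_value, new_value)
        VALUES ('accounts', OLD.id, 'DELETE', OLD.email, NULL);
    END;
""")

conn.execute("INSERT INTO accounts VALUES (1, 'ana@example.com')")
conn.execute("UPDATE accounts SET email = 'ana@new.example' WHERE id = 1")
conn.execute("DELETE FROM accounts WHERE id = 1")

history = conn.execute(
    "SELECT action, old_value, new_value FROM audit_log ORDER BY id"
).fetchall()
```

Because the log is written inside the same transaction as the change, an audit entry exists exactly when the change it describes was committed.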

Data lineage extends audit capabilities by documenting how information flows through systems, transformations applied, and dependencies between datasets. Understanding data lineage becomes essential as applications grow more complex and interconnected.

Performance Optimization Techniques

Query performance directly impacts user experience and system efficiency. Optimizing data design in software engineering for performance requires understanding database internals, indexing strategies, and query execution patterns.

Indexing Strategies

Database indexes accelerate data retrieval by creating optimized lookup structures. However, indexes impose costs during write operations and consume additional storage space.

- B-tree indexes supporting range queries and sorted results

- Hash indexes optimizing exact-match lookups

- Full-text indexes enabling complex search capabilities

- Spatial indexes accelerating geographic queries

- Composite indexes optimizing multi-column queries

Index selection requires analyzing query patterns, understanding cardinality, and balancing read performance against write overhead. The software design documentation should explicitly define indexing strategies and their rationale.
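
Query plans make the effect of an index visible. This sketch uses SQLite's `EXPLAIN QUERY PLAN` on a hypothetical `orders` table and query: without an index the planner scans the whole table and sorts in a temporary B-tree, while a composite index on `(customer_id, created_at)` serves the equality filter with its first column and both the range filter and the `ORDER BY` with its second. The exact plan text differs between SQLite versions, so the results are checked by substring.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("""
    CREATE TABLE orders (
        id INTEGER PRIMARY KEY,
        customer_id INTEGER NOT NULL,
        created_at TEXT NOT NULL,
        total_cents INTEGER NOT NULL
    )
""")

QUERY = (
    "SELECT id, total_cents FROM orders "
    "WHERE customer_id = ? AND created_at >= ? ORDER BY created_at"
)
PARAMS = (42, "2024-01-01")


def plan(sql, params):
    """Return the 'detail' column (last field) of each EXPLAIN QUERY PLAN row."""
    return [row[-1] for row in conn.execute("EXPLAIN QUERY PLAN " + sql, params)]


before = plan(QUERY, PARAMS)  # full table scan plus a temporary sort

# Composite index: equality column first, then the range/sort column.
conn.execute(
    "CREATE INDEX idx_orders_customer_created ON orders (customer_id, created_at)"
)
after = plan(QUERY, PARAMS)   # index search, rows already in created_at order
```

Reversing the column order would let the index help with the date range but not with the equality lookup, which is why the rationale for each composite index is worth writing down.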

Query Optimization

Beyond indexing, query optimization involves writing efficient SQL, avoiding N+1 query problems, and leveraging database-specific features. Query execution plans reveal how databases process operations, identifying opportunities for improvement.

Batch operations reduce round-trip overhead when processing multiple records. Eager loading prevents cascading queries in object-relational mapping scenarios. Connection pooling minimizes the cost of establishing database connections.
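
The N+1 problem and the batching fix can be made measurable with SQLite's statement tracing. The `authors` and `posts` tables are hypothetical; `set_trace_callback` records every SQL statement the connection actually executes, so the two access patterns can be compared by statement count.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE authors (id INTEGER PRIMARY KEY, name TEXT NOT NULL);
    CREATE TABLE posts (id INTEGER PRIMARY KEY, author_id INTEGER NOT NULL, title TEXT NOT NULL);
""")
# Batch inserts: one executemany call per table instead of a loop of execute calls.
conn.executemany("INSERT INTO authors VALUES (?, ?)",
                 [(i, f"author-{i}") for i in range(1, 51)])
conn.executemany("INSERT INTO posts VALUES (?, ?, ?)",
                 [(i, (i % 50) + 1, f"post-{i}") for i in range(1, 201)])

statements = []
conn.set_trace_callback(statements.append)  # records each executed SQL statement


def titles_with_authors_n_plus_one():
    """Anti-pattern: one query for the posts, then one more query per post."""
    result = []
    for _, author_id, title in conn.execute(
        "SELECT id, author_id, title FROM posts ORDER BY id"
    ):
        (name,) = conn.execute(
            "SELECT name FROM authors WHERE id = ?", (author_id,)
        ).fetchone()
        result.append((title, name))
    return result


def titles_with_authors_join():
    """Eager alternative: a single JOIN returns everything in one query."""
    return conn.execute("""
        SELECT p.title, a.name FROM posts p
        JOIN authors a ON a.id = p.author_id ORDER BY p.id
    """).fetchall()


statements.clear()
slow = titles_with_authors_n_plus_one()
n_plus_one_count = len(statements)  # 1 + one query per post

statements.clear()
fast = titles_with_authors_join()
join_count = len(statements)        # a single query
```

Against a networked database each of those extra statements is also a round trip, which is where the N+1 pattern hurts most; ORM eager-loading options generate the JOIN (or a batched `IN (...)` query) for the same reason.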

Modern Data Architecture Patterns

Contemporary applications increasingly adopt polyglot persistence, using different database technologies for different use cases within the same system. This approach selects optimal storage solutions for specific workload characteristics.

Microservices and Data Ownership

Microservices architecture assigns data ownership to individual services, creating bounded contexts that encapsulate related functionality and information. Each service maintains its own database, avoiding shared database anti-patterns that create coupling between services.

This approach introduces challenges around data consistency, distributed transactions, and cross-service queries. Event-driven architectures and eventual consistency patterns address these challenges while maintaining service independence.

Cloud-Native Data Solutions

Cloud platforms offer managed database services that reduce operational overhead while providing built-in scalability, backup, and disaster recovery capabilities. Serverless databases scale automatically with demand, reducing the need for up-front capacity planning.

However, cloud adoption requires careful consideration of data sovereignty, vendor lock-in, and cost optimization. Designing for cloud portability protects against future migration needs while leveraging cloud-specific advantages.

Testing and Validation of Data Designs

Validating data architecture requires both functional testing ensuring correct behavior and non-functional testing verifying performance, scalability, and reliability characteristics.

Data Migration Testing

Testing data migrations prevents production disasters when moving between schema versions or database platforms. Migration testing validates that transformations preserve data integrity, maintain referential relationships, and handle edge cases correctly.

Automated migration tests run against representative datasets, verifying that rollback procedures function correctly and that performance remains acceptable during migration windows.
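
A minimal migration test might look like the sketch below. The migration itself is hypothetical (splitting `people.full_name` into first and last name columns), and the fixture deliberately includes edge cases such as a single-word name and a multi-word name. The test checks that row counts and values survive the forward migration and that the rollback restores the original schema and data. The rollback uses a table rebuild because `ALTER TABLE ... DROP COLUMN` is only available in newer SQLite releases.

```python
import sqlite3


def migrate_up(conn: sqlite3.Connection) -> None:
    """Hypothetical migration: split full_name into first_name and last_name."""
    conn.executescript("""
        ALTER TABLE people ADD COLUMN first_name TEXT;
        ALTER TABLE people ADD COLUMN last_name TEXT;
        -- Text before the first space becomes first_name; the rest becomes last_name.
        UPDATE people SET
            first_name = CASE WHEN instr(full_name, ' ') > 0
                              THEN substr(full_name, 1, instr(full_name, ' ') - 1)
                              ELSE full_name END,
            last_name  = CASE WHEN instr(full_name, ' ') > 0
                              THEN substr(full_name, instr(full_name, ' ') + 1)
                              ELSE '' END;
    """)


def migrate_down(conn: sqlite3.Connection) -> None:
    """Rollback via a portable table rebuild that keeps only the original columns."""
    conn.executescript("""
        CREATE TABLE people_rollback (id INTEGER PRIMARY KEY, full_name TEXT NOT NULL);
        INSERT INTO people_rollback (id, full_name) SELECT id, full_name FROM people;
        DROP TABLE people;
        ALTER TABLE people_rollback RENAME TO people;
    """)


def make_fixture() -> sqlite3.Connection:
    """Representative data including edge cases: one-word and multi-word names."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE people (id INTEGER PRIMARY KEY, full_name TEXT NOT NULL)")
    conn.executemany("INSERT INTO people (full_name) VALUES (?)",
                     [("Ada Lovelace",), ("Plato",), ("Mary Ann Evans",)])
    return conn


conn = make_fixture()
before = conn.execute("SELECT id, full_name FROM people ORDER BY id").fetchall()

migrate_up(conn)
after = conn.execute(
    "SELECT id, first_name, last_name FROM people ORDER BY id"
).fetchall()
# Integrity check: the split must be reversible for every row.
lossless = all(
    (f"{first} {last}" if last else first) == full
    for (_, full), (_, first, last) in zip(before, after)
)

migrate_down(conn)
columns_after_rollback = [row[1] for row in conn.execute("PRAGMA table_info(people)")]
rolled_back = conn.execute("SELECT id, full_name FROM people ORDER BY id").fetchall()
```

Running the same checks against a production-sized copy of the data also gives a realistic estimate of how long the migration window needs to be.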

Load and Stress Testing

Performance testing under realistic load conditions reveals bottlenecks, capacity limits, and scaling characteristics. Gradually increasing load identifies the point where systems degrade, informing capacity planning and optimization priorities.

Stress testing pushes systems beyond expected limits, verifying graceful degradation and recovery capabilities. These tests expose race conditions, deadlocks, and resource exhaustion issues that often appear only under extreme conditions.

Documentation and Knowledge Transfer

Comprehensive documentation preserves design decisions, explains trade-offs, and facilitates knowledge transfer as teams evolve. The importance of documentation in maintaining software quality extends throughout the development lifecycle.

Effective data design documentation includes entity relationship diagrams, data dictionaries defining field meanings and constraints, migration histories tracking schema evolution, and performance benchmarks establishing baseline expectations.

Living documentation that evolves alongside systems provides more value than static documents that quickly become outdated. Teams implementing spec-driven development maintain formal specifications as authoritative references for data structures and behaviors.

Integration with Development Workflows

Data design in software engineering integrates with broader development processes through version control, continuous integration, and deployment automation. Database schema changes flow through the same review and testing processes as application code.

Schema migration tools enable incremental, reversible changes that deploy safely across environments. Feature flags allow testing new data structures in production without affecting existing functionality. Blue-green deployments minimize downtime during database updates.

Version controlling database schemas alongside application code ensures consistency and enables rollback capabilities. Migration scripts become part of the deployment pipeline, automatically applying changes during releases. Understanding how data design supports software engineering clarifies its role within modern development practices.
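
Dedicated tools such as Flyway, Liquibase, or Alembic handle this with far more features, but the core idea fits in a short sketch: an ordered, append-only list of migrations, a version number stored in the database (here SQLite's `PRAGMA user_version`), and a runner that applies only pending steps, each inside its own transaction. The migration list is hypothetical, and down migrations are omitted for brevity.

```python
import sqlite3
from typing import List

# Hypothetical, append-only list: migration N moves the schema to version N.
MIGRATIONS: List[str] = [
    "CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT NOT NULL)",
    "CREATE UNIQUE INDEX idx_users_email ON users (email)",
    "ALTER TABLE users ADD COLUMN created_at TEXT",
]


def current_version(conn: sqlite3.Connection) -> int:
    # user_version is an integer SQLite stores in the database file header.
    return conn.execute("PRAGMA user_version").fetchone()[0]


def migrate(conn: sqlite3.Connection) -> List[int]:
    """Apply pending migrations in order and return the versions applied.

    Expects a connection opened with isolation_level=None so the explicit
    BEGIN/COMMIT below fully control each transaction.
    """
    applied = []
    for version, sql in enumerate(MIGRATIONS, start=1):
        if version <= current_version(conn):
            continue  # already applied: reruns are no-ops
        conn.execute("BEGIN")
        try:
            conn.execute(sql)
            # PRAGMA does not accept bound parameters; version is a trusted int.
            conn.execute(f"PRAGMA user_version = {version}")
            conn.execute("COMMIT")
        except Exception:
            conn.execute("ROLLBACK")  # schema change and version bump undone together
            raise
        applied.append(version)
    return applied
```

Because the schema change and the version bump commit together, a failed migration leaves the database at the previous version, and the pipeline can simply rerun `migrate` on every deployment.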

Future Trends in Data Architecture

Emerging technologies and methodologies continue reshaping data design approaches. Graph databases gain adoption for highly connected data, offering intuitive modeling of complex relationships. Time-series databases optimize storage and querying of timestamped data common in IoT and monitoring applications.

Machine learning integration requires data architectures supporting feature engineering, model training, and real-time inference. Data versioning and reproducibility become essential for maintaining model quality and regulatory compliance.

Distributed ledger technologies introduce new patterns for maintaining tamper-proof records across organizational boundaries. While blockchain solutions impose performance constraints, they enable novel use cases requiring decentralized trust.

The evolution toward data mesh architectures decentralizes data ownership, treating data as products managed by domain teams. This organizational pattern aligns data responsibility with business knowledge, improving quality and relevance while maintaining governance standards.

Thoughtful data design in software engineering creates the foundation for scalable, maintainable, and high-performance applications. By applying proven patterns, anticipating growth, and prioritizing data quality, development teams build systems that deliver lasting business value. Whether launching a new digital product or evolving existing applications, Vicedomini Softworks brings deep expertise in data architecture and custom software development, partnering with startups and established companies to transform ideas into production-ready solutions that scale with business growth.